Published online: March 2021

The paper, co-authored with Steve DiPaola and Hannu Töyrylä, is available online at https://library.imaging.org/jpi/articles/4/2/jpi0139.

Abstract

Recent developments in neural network image processing motivate the question, how these technologies might better serve visual artists. Research goals to date have largely focused on either pastiche interpretations of what is framed as artistic “style” or seek to divulge heretofore unimaginable dimensions of algorithmic “latent space,” but have failed to address the process an artist might actually pursue, when engaged in the reflective act of developing an image from imagination and lived experience. The tools, in other words, are constituted in research demonstrations rather than as tools of creative expression. In this article, the authors explore the phenomenology of the creative environment afforded by artificially intelligent image transformation and generation, drawn from autoethnographic reviews of the authors’ individual approaches to artificial intelligence (AI) art. They offer a post-phenomenology of “neural media” such that visual artists may begin to work with AI technologies in ways that support naturalistic processes of thinking about and interacting with computationally mediated interactive creation.

---

The images below show part of the style-transfer study process behind images in the paper.

Figure 1.

Content

Style

Figure 1 maps content against style: the weight of the content image increases incrementally along the x-axis, while the style scale decreases along the y-axis. This provides a qualitative map of the style-transfer latent space for the source images of interest.

In the first example, the content is a neutral 50% grey rectangle and the style is a simple gradient target. The intent was to explore how a range of tones with small detail accents maps into a generic content space, focusing on the behaviour of the style representation somewhat detached from the underlying content.
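As a rough illustration of how a study grid like Figure 1 might be laid out, the sketch below enumerates one cell per (content weight, style scale) pair. It is not the code used for the study: the helper names `linspace` and `build_sweep`, the numeric ranges, and the choice to let style scale decrease from the top row to the bottom row are all assumptions made for illustration. Each cell would then be passed to whatever style-transfer routine is in use.

```python
def linspace(start, stop, steps):
    """Return `steps` evenly spaced values from start to stop, inclusive."""
    if steps == 1:
        return [start]
    step = (stop - start) / (steps - 1)
    return [start + i * step for i in range(steps)]


def build_sweep(content_weights, style_scales):
    """Return grid rows of (content_weight, style_scale) cells.

    Columns follow increasing content weight (x-axis).
    Rows follow decreasing style scale (y-axis, assumed top to bottom).
    """
    xs = sorted(content_weights)
    ys = sorted(style_scales, reverse=True)
    return [[(cw, ss) for cw in xs] for ss in ys]


# Hypothetical ranges, chosen only to show the layout.
grid = build_sweep(linspace(1.0, 5.0, 5), linspace(0.5, 2.0, 4))

for row in grid:
    print("  ".join(f"cw={cw:.1f}/ss={ss:.1f}" for cw, ss in row))
```

Laying the parameters out this way keeps each rendered cell traceable to its settings, which makes it easier to return to the regions of the grid that look most promising.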

Figure 1b.

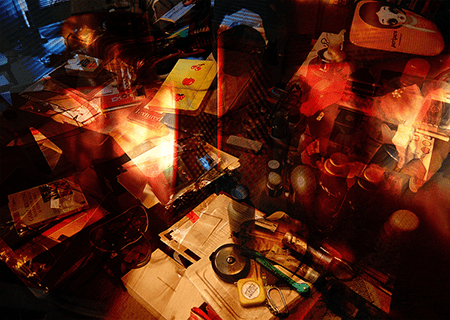

Overlay content

Overlay style

Figure 1b shows an overlay of a style and content image I chose from my ‘artist’s journal’ that I wished to map into this space. The overlay is only mapped into the regions of the original study that I found most promising (emotively, aesthetically “resonant”) for the development of a neural painting. The overlay images are from the same series as Figure 2 in the (—) paper.

Figure 1c.

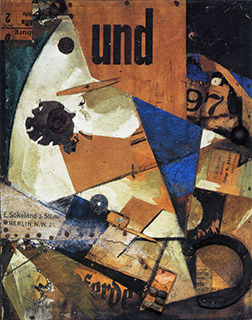

Content

Style

Figure 1c is another example using different style and content images. The style image is Kurt Schwitters’ Das Undbild and the content image is a stock photo of a former US president signing an executive order to much fanfare (he thought). This study led to When Schwitters met Trump, an image that was shown at the European Conference on Computer Vision (ECCV, 2018) in Munich, Germany, and that may be seen elsewhere on this site. The study is cut off here, and the content image blurred, because the content image cannot be displayed for copyright reasons. This does, however, raise a very interesting question for AI image manipulation: at what point does copyright infringement “emerge”?